Posts Tagged ‘search’

Thursday, July 28th, 2011

Meta tags are special HTML tags that contain information, or metadata, that is readable by browsers, search engines, and other programs. Metadata is commonly referred to as “data about data”: it is documented information about a specific set of data, and here it is used to describe the contents of a webpage. Meta tags only contain page information; they do not change the appearance of the page. These tags are placed in the head section of the HTML code and are only visible to users when viewing the page source. The following is how Meta tags appear in a website’s source code.

<HEAD>

<TITLE>How to Create a Meta Tag</TITLE>

<META name="description" content="Everything you want to know about Meta Tags">

<META name="keywords" content="Keyword 1, Keyword 2, Keyword 3, Keyword 4">

</HEAD>

from http://googlewebmastercentral.blogspot.com/2009/09/google-does-not-use-keywords-meta-tag.html, July 2011
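To illustrate how a program might read these tags, here is a minimal sketch using Python's standard `html.parser` module. The class name `MetaTagParser` and the sample page are our own illustration, not how any particular search engine processes pages.

```python
from html.parser import HTMLParser


class MetaTagParser(HTMLParser):
    """Collects name/content pairs from <meta> tags (illustrative helper)."""

    def __init__(self):
        super().__init__()
        self.meta = {}

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag and attribute names before calling this.
        if tag == "meta":
            attributes = dict(attrs)
            name = attributes.get("name")
            if name:
                self.meta[name.lower()] = attributes.get("content", "")


# Sample page modeled on the snippet above.
SAMPLE_PAGE = """
<HEAD>
<TITLE>How to Create a Meta Tag</TITLE>
<META name="description" content="Everything you want to know about Meta Tags">
<META name="keywords" content="Keyword 1, Keyword 2, Keyword 3, Keyword 4">
</HEAD>
"""

parser = MetaTagParser()
parser.feed(SAMPLE_PAGE)
print(parser.meta["description"])
print(parser.meta["keywords"].split(", "))
```

Nothing in the page's visible content is touched; the parser only reads the information stored in the head section.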

Are Meta Tags helpful? Meta tags are usually used for Search Engine Optimization and are thought to help web crawlers index your pages more accurately. Although Meta tags once held tremendous value for website ranking, they no longer carry the same importance. Over time, less and less weight has been assigned to Meta tags because they were manipulated and abused. Websites began keyword stuffing (using keywords excessively) and listing irrelevant information in their Meta tags to attract and mislead traffic to their pages. As more site owners caught on to these black hat SEO techniques, the search engines shifted their attention and placed more emphasis on actual content. Shifting their focus allowed the search engines to evaluate web pages based on visible content rather than depending on a Meta tag to deliver accurate information.

Meta tags no longer play the same critical role in SEO as they once did, but that doesn’t necessarily mean that they are all worthless. It is believed that certain Meta tags can still generate greater… Read the rest

Tags: content, keyword, Meta, Meta Tag, Meta Tags, search, search engine, tag, tags, webrank

Posted in Search Engines, Web Development | No Comments »

Thursday, June 16th, 2011

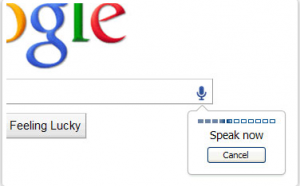

Eliminating the barriers that stand between users and information is an endless goal for Google. The company continues to revolutionize the way users search the internet and strives to provide the most relevant content possible. On Tuesday, June 14, 2011, Google held a media event in San Francisco to announce new features that it hopes will drastically change how users search the internet. Among the features announced were Instant Pages, Search by Image, and Voice Search.

from http://www.google.com/insidesearch/voicesearch.html, June 2011

At the event, Google also shared its latest search statistics. The stats showed that mobile search is following the same usage pattern as the early years of Google desktop search. Within the past couple of years mobile searching has grown tremendously, and with technology like voice recognition it is expected to grow even more. Google believes that voice recognition shouldn’t be limited to the mobile experience, which is why Voice Search is now available on the desktop. Its voice recognition currently draws on over 230 billion words or phrases, and each day the system captures over 2 years’ worth of voice searches.

Voice search allows users to simply speak what they are looking for and Google will find it; searching doesn’t get any easier than that. Voice search also helps when users need to perform searches that are longer, more complex, or harder to spell. Google Chrome 11 or higher and a microphone are required to use Google Voice Search. From there, voice searching is as easy as clicking the small microphone button located by the search bar and speaking your search terms.

Another feature that first staked its ground on mobile devices was Google Goggles. Google Goggles allows users to search by snapping a picture with their mobile device. Google search now lets users search places, art, and animals using images on desktop computers.… Read the rest

Tags: Chrome, Google, Google Chrome, Mobile, search, Search Results, voice recognition, voice search

Posted in Browser Modifications, Search Engines | No Comments »

Friday, April 22nd, 2011

Search engines take into consideration a large number of factors when assigning a rank to a website. Although there are a number of factors that are obvious or specifically identified as ranking factors, the majority of the ranking criteria are kept private. Search engines avoid publicizing the exact pieces of the Search Engine Optimization puzzle that they use to determine a rank because it would most likely lead to a massive overflow of irrelevant search results.

Search engines recognize that if all the sites on the Internet knew the characteristics that they use, then every site would be able to rank higher. Providing this information would make it too easy for websites to manipulate their rank and would go against the search engines’ main goal, which is to provide users with the most relevant and useful search results. Clearly expressing the measurements that are used to rank a website would prevent a relevant site from standing out among the other results and could potentially place a higher rank on a page that is worse or less relevant to a user.

Search engines use both on-page and off-page elements to assign a rank. On-page factors usually focus on internal site structure, including keyword use and internal linking, whereas off-page factors consider who you link to, how you link to them, and the popularity or relevance of your links. Some of the factors that are known or thought to be important to ranking well in search engines include:

- Keyword in the page’s Title Tag.

- Appropriate and descriptive anchor text of Inbound Links.

- Global Link Popularity of Site.

- Age of domain.

- Link Popularity or equity within the internal link Structure.

- Relevance of an inbound link to the site and the text surrounding that link.

- Keyword use throughout the body copy.

- The popularity or authority of the website providing an inbound link.

With that being said, one of the major factors that search engines do directly identify as being harmful to a website’s rank is Duplicate Content. Duplicate Content is best described as a portion or… Read the rest

Tags: content, duplicate, duplicate content, inbound link, link, rank, search, search engine, website

Posted in Internet Marketing, Search Engine Optimization SEO, Web Tips | No Comments »

Friday, April 8th, 2011

Released to a limited number of users on April 2, 2011 and later expanded for the remaining Google account users, Google +1 has introduced another way for users to recommend websites and share their preferences with the rest of the web.

Google +1

from www.google.com/+1/button

April 2011

The Plus One (+1) feature is not an entirely new concept and is very similar to the “Like” button on Facebook. The major difference seems to be the service’s name and the primary platform on which it is used. Similar to Facebook, users can use the +1 option to show that they recommend a website, both on Google and on the websites that provide the Plus One option through a widget. In order to take advantage of this new feature, a user will need to create a Google account.

Once a user establishes a Google account, they can begin selecting the +1 option that appears below each of the search results. After a user has placed their stamp of approval on a listing via the Plus One option, it will appear on a new tab in their Google profile. This newly created tab is where a user is able to manage all of the items that they have chosen to +1. The options include the ability to remove a recommendation and to choose who is allowed to view the items marked as Plus One. The +1 feature lets the user choose whether the +1’s (recommendations) are private, public, or customized to grant permission to specific users.

If a user decides to keep their Plus One item private, they will still be added to the number of people who recommend the site; however, their profile name will remain hidden. For example, below a search result it will display “54 people +1’d this” as opposed to their user name plus the number of other people who have chosen the Plus One option. Users will be able to… Read the rest

Tags: Facebook, Google, Google +1, like, search, search engine, search result, user

Posted in Internet Marketing, Search Engines, Social Media Marketing | 1 Comment »

Wednesday, March 16th, 2011

Google’s Blog recently announced a new method for customizing its users’ search experience, which will be available soon.

March 15, 2011 from http://googleblog.blogspot.com

Recognizing that the search results they provide may not be a perfect match, Google is now providing a search site-filter. Initially, Google released this feature as a part of their Google Chrome web browser in January 2011. Seeing that about 15% of Internet users browse using Google Chrome, Google recognized the benefit of offering this feature in their search engine in addition to Google Chrome (see Chrome market share).

Providing this personalized site-filtering feature enables users to avoid unrelated information and block undesirable websites from appearing in their search results. Whether the website is offensive, low quality, or simply not to a user’s liking, they now have the option to exclude it from their search results. This feature is now available for people using Google Chrome 9+, Internet Explorer 8+, and Firefox 3.5+.

Search Results

Although this feature provides customers with a better search experience, could this be used to determine the future rank of a website? In their blog, Google states that they may eventually evaluate and use the data from this feature as a signal for ranking. Presently they are only attempting to offer customers an easy and more controlled search tool.

How It Will Work

When using a compatible browser, the person performing the search will notice a “Block all example.com website results” link next to the “cached” and “similar” links. These links are located next to the website’s URL, following the description. After the user confirms the request to block the site, its results will be hidden until the user decides to deactivate the block. Once a site is blocked, if the search results contain that website, a message is displayed to the user stating some of the results have… Read the rest

Tags: browser, Chrome, filtering feature, Google, internet, results, search, site blocking, website

Posted in Browser, Search Engines | No Comments »

Friday, February 25th, 2011

The time that it takes for a website to load is commonly referred to as site speed. Site speed is measured from the moment a link is clicked or a URL is entered into the browser’s address bar. Depending on the type of website and how heavy its content is, a site typically loads in 2 to 5 seconds. A load time below 2 seconds is thought to be excellent. A load time between 2 and 5 seconds might not be the fastest; however, it is perfectly acceptable. Anything slower can annoy or turn away visitors and be less popular with search engines.
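As a rough illustration, the bands above can be written as a small Python function. The function name `rate_load_time` and the labels are our own, and treating exactly 5 seconds as still acceptable is an assumption, since the boundary is not spelled out.

```python
def rate_load_time(seconds):
    """Map a page load time in seconds to the rough bands described above."""
    if seconds < 2:
        return "excellent"
    if seconds <= 5:  # assumption: exactly 5 seconds still counts as acceptable
        return "acceptable"
    return "slow"


for sample in (1.4, 3.0, 7.5):
    print(sample, "->", rate_load_time(sample))
```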

A site’s speed is only one of the many factors that search engines use in their website ranking algorithms. Search engines employ ranking algorithms in order to assign a page rank to a website and to ensure that a search returns the best and most relevant results. The following are options that site owners can apply to their sites in order to reduce the time it takes for their website to load. Reducing a site’s load time, or increasing a site’s speed, will generate better customer responses as well as appeal more to search ranking algorithms.

Before attempting the following suggestions, you should try out these free tools to see how your site measures up. Pingdom Load Time Test is an easy tool that allows you to assess the speed of your website. GTmetrix analyzes site speed, provides a grade for your website, and generates a list of potential problems that it recommends you fix. These are just two of the many free tools available to measure the performance of your website. Upon completion of these tests, if you are unsatisfied with your website’s performance, we recommend implementing the following changes.

Compress your Website:

Website data compression converts all of a website’s text elements that are written in HTML, CSS, and JavaScript into bundles. After the compression process is complete websites no longer have to send and recognize each individual file.… Read the rest
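To give a feel for how much text-based content can shrink, here is a minimal sketch using Python's standard `gzip` module. We are assuming gzip-style compression here; the sample markup is made up, and actual savings depend on the page.

```python
import gzip

# A repetitive, made-up HTML snippet; markup tends to compress well
# because the same tags and attributes appear over and over.
html = ('<li class="item"><a href="/page">Example link</a></li>\n' * 200).encode("utf-8")

compressed = gzip.compress(html)
restored = gzip.decompress(compressed)

print(len(html), "bytes before compression")
print(len(compressed), "bytes after compression")
print("round trip intact:", restored == html)
```

On a live site this step is normally handled by the web server configuration rather than by hand, so treat the snippet as a demonstration of the idea only.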

Tags: search, search engine, site, speed, website

Posted in Search Engine Optimization SEO | 1 Comment »

Friday, February 18th, 2011

WordPress is web-based software that allows users to create and manage websites and blogs. Its open source structure enables people from around the world to contribute to the software and to maintain their WordPress website, whether it is hosted on WordPress’ web server or installed on their own web server. Although WordPress began as a blog tool, it has developed into something so much more. By utilizing plug-ins, widgets, and themes, users have the opportunity to fully customize their experience. A plug-in is software that can be added to existing software, like WordPress, to give the larger application a new, specific capability. For a full list of WordPress product features visit WordPress Product Features.

There are over thirteen thousand plug-ins available for the WordPress software, making it extremely easy to tailor it to your specific uses. The following are some useful SEO plug-ins that the WordPress open source community has provided.

All-in-one SEO plug-ins such as WordPress SEO and SEO Scribe are designed to perform multiple SEO tasks, including optimizing content faster, providing great keywords, maintaining reader engagement, constructing quality links, and increasing traffic! Most of the all-in-one SEO plug-ins operate similarly and perform similar functions. The following are among the most utilized all-in-one SEO WordPress plug-ins:

Below is a list of related, individual plug-ins that can be helpful in improving your blog or website’s Search Engine Optimization and enhancing Social Media Marketing:

AddToAny Share/Bookmark/Email:

AddToAny creates a customizable panel that showcases social networking sites. It makes it extremely easy and convenient for a visitor to share your content on any social networking site. The share menu organizes the social media sites according to most visited.

SEO Friendly Images:

The SEO Friendly Images WordPress plug-in automatically adds the “ALT” and “title” attributes to any images on your

… Read the rest

Tags: Blog Hints, link, plug, plugins, search, seo, site, title, Wordpress, wordpress plug ins

Posted in Blog Hints, Internet Marketing, Search Engine Optimization SEO, WordPress | No Comments »

Friday, February 11th, 2011

Advertising in any form has the goal to attract more customers and more revenue. Like advertising on television or in print ads, the world of online advertising shares the same purpose.

Basically, online advertising sets out to attract customers by using the Internet, with websites and search engines as its medium. In the Pay per Click (PPC) advertising model, a host is compensated each time the promoter’s advertisement is clicked. The Cost per Click (CPC) is the actual amount paid by the advertiser to either the search engine or the website owner for each click that occurs. Websites that utilize this type of advertising will often present an advertisement or text link when there is a common term between the website’s keyword query and the advertiser’s keyword list. In other words, when a website has information related to the advertiser, a link will be displayed beside, above, or in line with the website’s original content, depending on the website. There are several related pricing models for online advertising, which include Cost per Mille (CPM), Cost per Visitor (CPV), Cost per Lead (CPL), Cost per Click (CPC), and Cost per Action (CPA).
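The arithmetic behind two of these models can be sketched in a few lines of Python. The function names and the sample figures below are made up for illustration; "mille" means one thousand impressions.

```python
def cpc_cost(clicks, cost_per_click):
    """Total owed under Cost per Click: the advertiser pays for every click."""
    return clicks * cost_per_click


def cpm_cost(impressions, cost_per_mille):
    """Total owed under Cost per Mille: the advertiser pays per 1,000 impressions."""
    return impressions / 1000 * cost_per_mille


# Hypothetical campaign figures, chosen only to show the calculation.
print(cpc_cost(clicks=120, cost_per_click=0.50))        # 60.0
print(cpm_cost(impressions=40000, cost_per_mille=2.0))  # 80.0
```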

When performing a search at a search engine, you may have noticed that the results include sponsored links. The sponsored links appear at the top or sides of the page, and advertisers pay to be in these sponsored link sections. Each time a visitor accesses the site through such a link, money is paid to the search engine through a prepaid account with that search engine. In order to obtain a sponsored link position, advertisers place bids for the amount of money they are prepared to pay per click. Since there are multiple link positions available, the highest bid is placed at the top, the next highest is placed second, and so on. In addition to the websites being able to determine how much they pay per click they can also specify how much they… Read the rest
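The bid ordering described above can be sketched as a simple sort. This Python snippet models only that ordering; the advertiser names and bid amounts are hypothetical, and a real search engine may weigh other factors that are not covered here.

```python
def rank_by_bid(bids):
    """Return advertiser names ordered from highest bid to lowest."""
    return sorted(bids, key=bids.get, reverse=True)


# Hypothetical bids, in dollars per click.
bids = {"advertiser_a": 0.75, "advertiser_b": 1.20, "advertiser_c": 0.40}

for position, advertiser in enumerate(rank_by_bid(bids), start=1):
    print(position, advertiser, bids[advertiser])
```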

Tags: advertising, cost, engine, money, search, service, site, website

Posted in Internet Marketing, Search Engine Optimization SEO | No Comments »

Friday, February 4th, 2011

With SEO, some primary goals include creating good content, increasing usability for viewers, and promoting a website’s popularity; however, there are certain practices out there that we avoid. These practices are designed to quickly or prematurely inflate rankings and take advantage of the factors that search engines use when ranking sites. These techniques are often referred to as Black Hat Techniques.

While folks assume there are benefits to using such techniques, there are also major downsides. After using Black Hat techniques a website may temporarily hold a high rank, but it will most likely not last very long. Other immediate negative consequences include a site being banned, having penalties placed against it, or having its web pages wiped of their search rank value.

Although search engines devote resources and effort to putting a stop to these methods some still exist. Search engines have worked consistently to ensure that white hat methods remain dominant and discourage the use of black hat tactics.

There are quite a few SEO methods out there that can inadvertently land a website in the search engine dog house. Whether your site has been flagged, penalized, or banned, or you just want to know what to avoid, here is a brief list of some of the Black Hat SEO methods that could cause issues for a web site.

Cloaking is used to deceive the search engines: what a user sees is different from what a search engine robot (bot) would find. Although a site that uses a cloaking technique is open to a penalty or could be banned, there are a few acceptable cloaking techniques called White Hat Cloaking.

Manipulative linking occurs in various forms and exploits search engine link popularity. A common method is using link farms, which is where a group of sites hyperlink to all other sites in the group and the process is generally automated. Some other examples of manipulative linking… Read the rest

Tags: black hat SEO, engine, internet, method, search, search engine, seo

Posted in Internet Marketing, Search Engine Optimization SEO | 1 Comment »

Friday, January 14th, 2011

Search Engine Optimization Fundamentals

Search Engine Optimization at its root is the process of increasing exposure to a website by enhancing the site’s relevance and importance in the eyes of search engines. In order to gain this exposure or reach more customers, the process of SEO sets out to make a website more rank worthy. The higher a website is ranked on a search engine, the more likely people are to access it.

A search engine has four main tasks: Crawling, Indexing, Ranking, and Providing Results. The World Wide Web is a massive network where everything is connected through linking. A search engine navigates, or crawls, through the network locating web pages, images, or video files using automated programs called crawlers or spiders. These programs are launched to scour the Internet, reading pieces of code from websites. The pieces of information copied by a crawler allow the visited location to be indexed, or saved, in one of the search engine’s massive databases. Search engine companies have data centers all around the world that hold these databases in order to quickly recall the information upon request of the user.

In those first two tasks the search engine sought out and stored the pages it found. After crawling and indexing, the next duty of the search engine is to calculate the indexed webpage’s relevance and determine its ranking. From here it assigns an importance, which usually comes from the quantity and quality of the information as well as the webpage’s popularity. In addition, there are many other ranking factors, and various search engines value each factor differently. Once a rank is designated, the page is ready to be displayed for a user to access. Although all that is visible to us when performing a search are our search terms going in and the search results coming out, behind the scenes there is a tremendous amount of action and effort going on to provide us with the information that we request.
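The four tasks can be pictured with a toy Python example. Everything below is a simplified sketch under our own assumptions: the three-page "web" lives in memory, the URLs are placeholders, and popularity is reduced to a count of inbound links, which is far cruder than anything a real search engine does.

```python
from collections import defaultdict, deque

# Hypothetical in-memory "web": URL -> (page text, outgoing links).
PAGES = {
    "a.example": ("seo basics and ranking", ["b.example", "c.example"]),
    "b.example": ("ranking factors explained", ["c.example"]),
    "c.example": ("seo ranking tips", []),
}


def crawl(start):
    """Crawling: follow links breadth-first, visiting each page once."""
    seen, queue = [start], deque([start])
    while queue:
        url = queue.popleft()
        for link in PAGES[url][1]:
            if link not in seen:
                seen.append(link)
                queue.append(link)
    return seen


def build_index(urls):
    """Indexing: map every word to the set of pages that contain it."""
    index = defaultdict(set)
    for url in urls:
        for word in PAGES[url][0].split():
            index[word].add(url)
    return index


def inbound_links(urls):
    """Ranking signal: count how many crawled pages link to each page."""
    counts = {url: 0 for url in urls}
    for url in urls:
        for link in PAGES[url][1]:
            counts[link] += 1
    return counts


def search(term, index, popularity):
    """Providing results: matching pages, most linked-to first."""
    return sorted(index.get(term, ()), key=lambda url: (-popularity[url], url))


crawled = crawl("a.example")
index = build_index(crawled)
popularity = inbound_links(crawled)
print(search("seo", index, popularity))
```

Here the page with the most inbound links is listed first, which mirrors the idea that a webpage's popularity feeds into its rank.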

According to Google these are some tips to get a better webpage ranking with them.

- Good webpage Content!

… Read the rest

Tags: engine, rank, ranking, ranking factor, seach engine, search

Posted in Search Engine Optimization SEO | 1 Comment »
